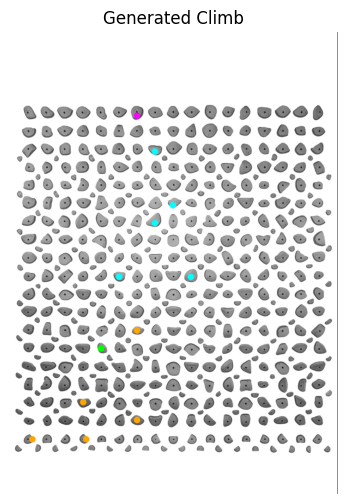

About the project

The Kilter Board is an LED-lit climbing wall with hundreds of holds — climbers pick a route, the board lights up the relevant holds in colors (start, foot, hand, top), and the climber sends. There are tens of thousands of community-shared routes, but generating new ones is still done by hand.

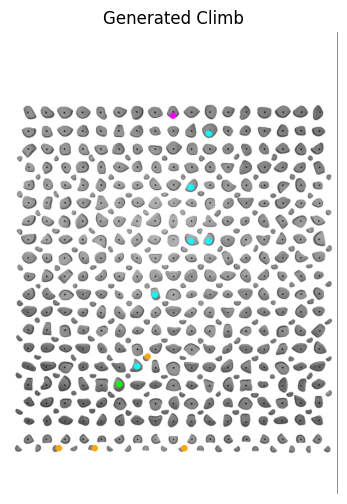

Kilter AI learns to do this automatically. Given the board layout, a target difficulty, and the wall angle, it generates a brand-new climbing route by predicting which holds to light up and in what role (hand, foot, start, finish).

To train it, we built a scraper that pulls real climbs from the Kilter Board mobile app, processes the LED state into a structured dataset, and feeds it through a stack of recurrent models — eventually settling on a multi-task RNN that predicts hold position and hold role jointly. Side experiments include a Markov chain and a VAE for comparison. Work done with Dr. Amir Ghasemkhani at CSULB.
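As a rough illustration of the Markov chain side experiment, the sketch below fits first-order transition counts over hold indices and samples a new sequence from them. The toy routes, the `START`/`END` sentinels, and the function names are assumptions made for this example. They are not the project's code or data, and the real baseline may be built differently.

```python
import random
from collections import Counter, defaultdict

START, END = "<start>", "<end>"  # hypothetical sentinel tokens

def fit_transitions(routes):
    """Count first-order transitions between consecutive hold ids."""
    counts = defaultdict(Counter)
    for route in routes:
        tokens = [START, *route, END]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def sample_route(counts, rng, max_len=30):
    """Walk the chain from START until END is drawn or max_len holds are emitted."""
    route, state = [], START
    while len(route) < max_len:
        options = counts[state]
        state = rng.choices(list(options), weights=list(options.values()))[0]
        if state == END:
            break
        route.append(state)
    return route

# Toy data: each route is a sequence of hold indices (made up for the example).
toy_routes = [[12, 40, 77, 157], [12, 41, 77, 160], [15, 40, 80, 157]]
toy_counts = fit_transitions(toy_routes)
print(sample_route(toy_counts, random.Random(0)))
```

A chain like this only sees the previous hold, so it cannot condition on grade or wall angle without extra bookkeeping. That limitation is one reason it is useful as a comparison point rather than as the main generator.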

How it works

- Scrape. An image-based scraper drives the Kilter Board app, captures the rendered board for thousands of community climbs, and reads the per-hold LED color to recover (row, column, role) for every active hold.

- Index the board. The physical layout of holds is mapped into a discrete grid so the model can predict "hold #157" instead of pixel coordinates.

- Train. A stack of RNN variants (12 generations from RNN1 to RNN15), where each iteration adds a prediction head: position → role → angle → sequence order. Loss is class-weighted to compensate for the heavy imbalance between hand and foot holds.

- Sample. At inference the model emits a sequence of (position, role) pairs until the route closes on a top hold. The generator is conditioned on a target grade and wall angle.
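The indexing and sampling steps can be sketched together as follows. This is a minimal illustration under stated assumptions: the grid width `N_COLS`, the row-0-at-the-bottom convention, the grade string `"V4"`, and especially `toy_model` (a random stand-in for the trained network) are all hypothetical. Only the overall shape is taken from the description above: flat hold ids, and a loop that emits (position, role) pairs until a top hold appears.

```python
import random

N_COLS = 17  # assumed grid width; the real board layout may differ
ROLES = ("start", "hand", "foot", "top")

def to_index(row, col, n_cols=N_COLS):
    """Flatten a (row, col) grid cell into a single hold id."""
    return row * n_cols + col

def to_cell(index, n_cols=N_COLS):
    """Invert to_index: recover (row, col) from a hold id."""
    return divmod(index, n_cols)

def toy_model(prefix, grade, angle, rng):
    """Random stand-in for the trained network: returns one (position, role) pair.

    grade and angle are accepted to mirror the conditioning inputs but are
    ignored here; a real model would use them.
    """
    if not prefix:
        # First hold: a start hold somewhere in the bottom three rows.
        return rng.randrange(0, 3 * N_COLS), "start"
    last_row, _ = to_cell(prefix[-1][0])
    row = last_row + rng.randint(1, 3)  # assumption: row 0 is the bottom, always move up
    role = "top" if row >= 10 else rng.choice(("hand", "foot"))
    return to_index(min(row, 10), rng.randrange(N_COLS)), role

def generate_route(model, grade, angle, rng, max_holds=40):
    """Emit (position, role) pairs until a top hold closes the route."""
    route = []
    while len(route) < max_holds:
        position, role = model(route, grade, angle, rng)
        route.append((position, role))
        if role == "top":
            break
    return route

route = generate_route(toy_model, grade="V4", angle=40, rng=random.Random(0))
for position, role in route:
    print(to_cell(position), role)
```

The `max_holds` cap is a safety net for a model that never predicts a top hold. With the assumed 17-column grid, `to_index(9, 4)` happens to give 157, the same kind of id as the "hold #157" example.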
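To make the class-weighted loss concrete, here is one common way to compute it. The description above does not say which weighting scheme the project uses, so the inverse-frequency formula (the same heuristic scikit-learn calls "balanced"), the label counts, and the function names below are assumptions for illustration.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class), normalized by the total weight."""
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)

# Made-up role labels for the example: hands dominate, start and top are rare.
labels = ["hand"] * 6 + ["foot"] * 2 + ["start"] + ["top"]
weights = inverse_frequency_weights(labels)
print(weights)

# A hypothetical model that spreads probability uniformly over the four roles.
uniform = [{role: 0.25 for role in weights} for _ in labels]
loss = weighted_cross_entropy(uniform, labels, weights)
print(loss)
```

Rare roles get larger weights, so a mistake on a top or start hold costs more than a mistake on a common hand hold. Without this, a model can reach a low loss by predicting the majority role almost everywhere.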

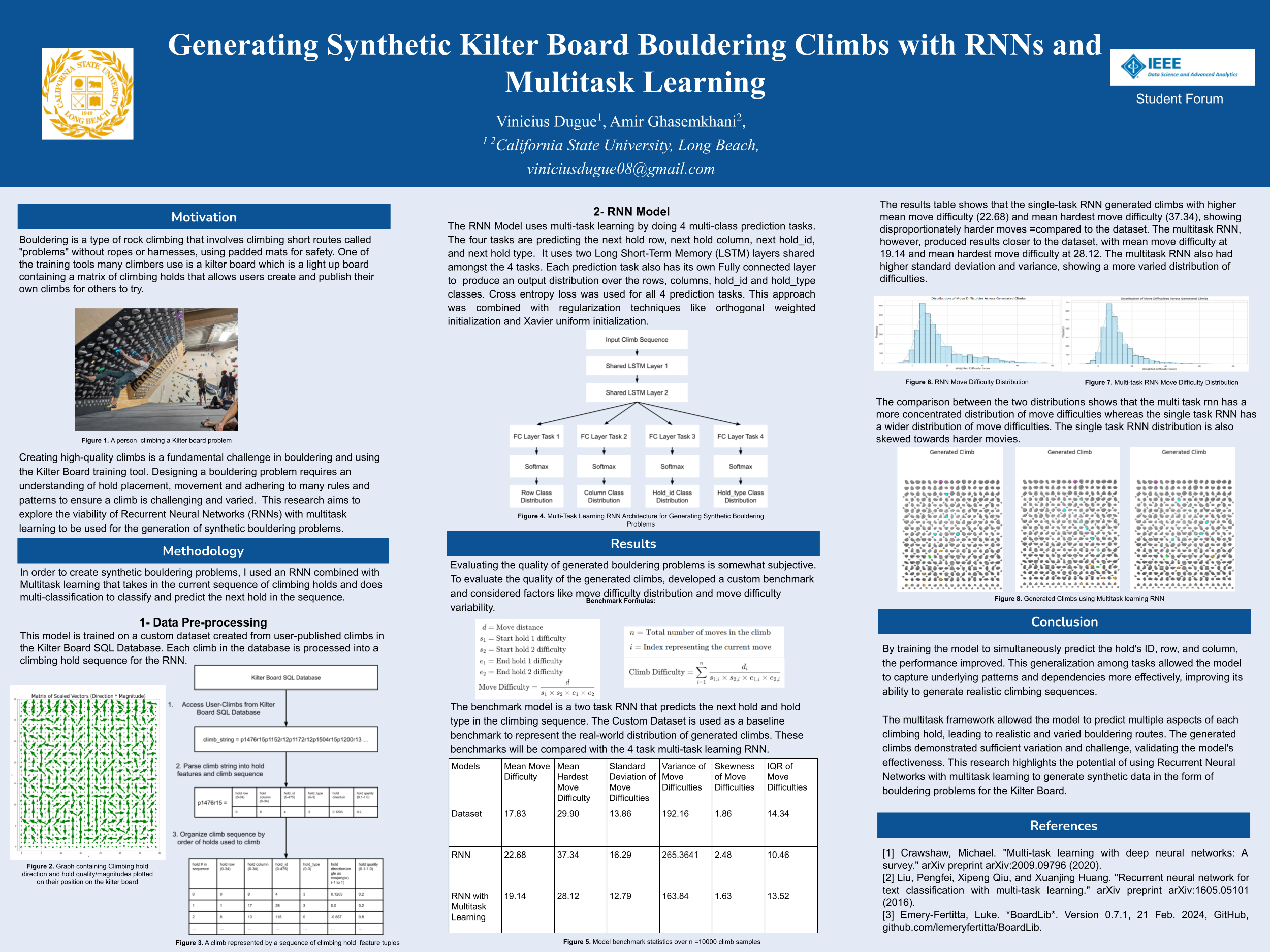

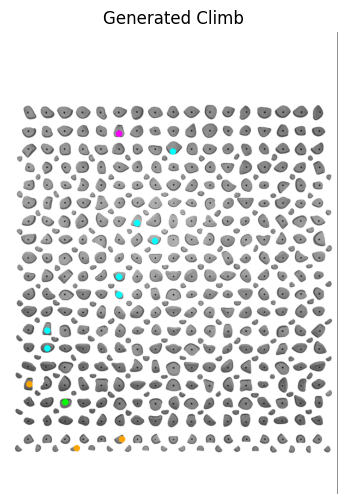

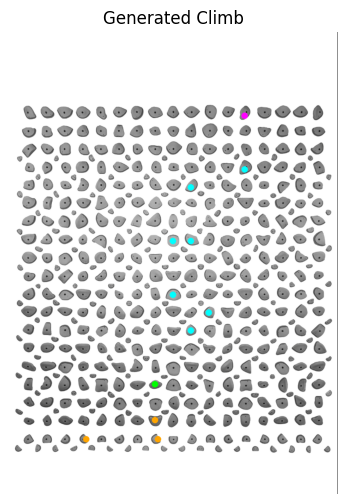

Generated climbs

Four routes the model produced. Cyan = handholds, magenta = top, orange = start, green = feet — matching the Kilter Board's own color convention.

Conference poster